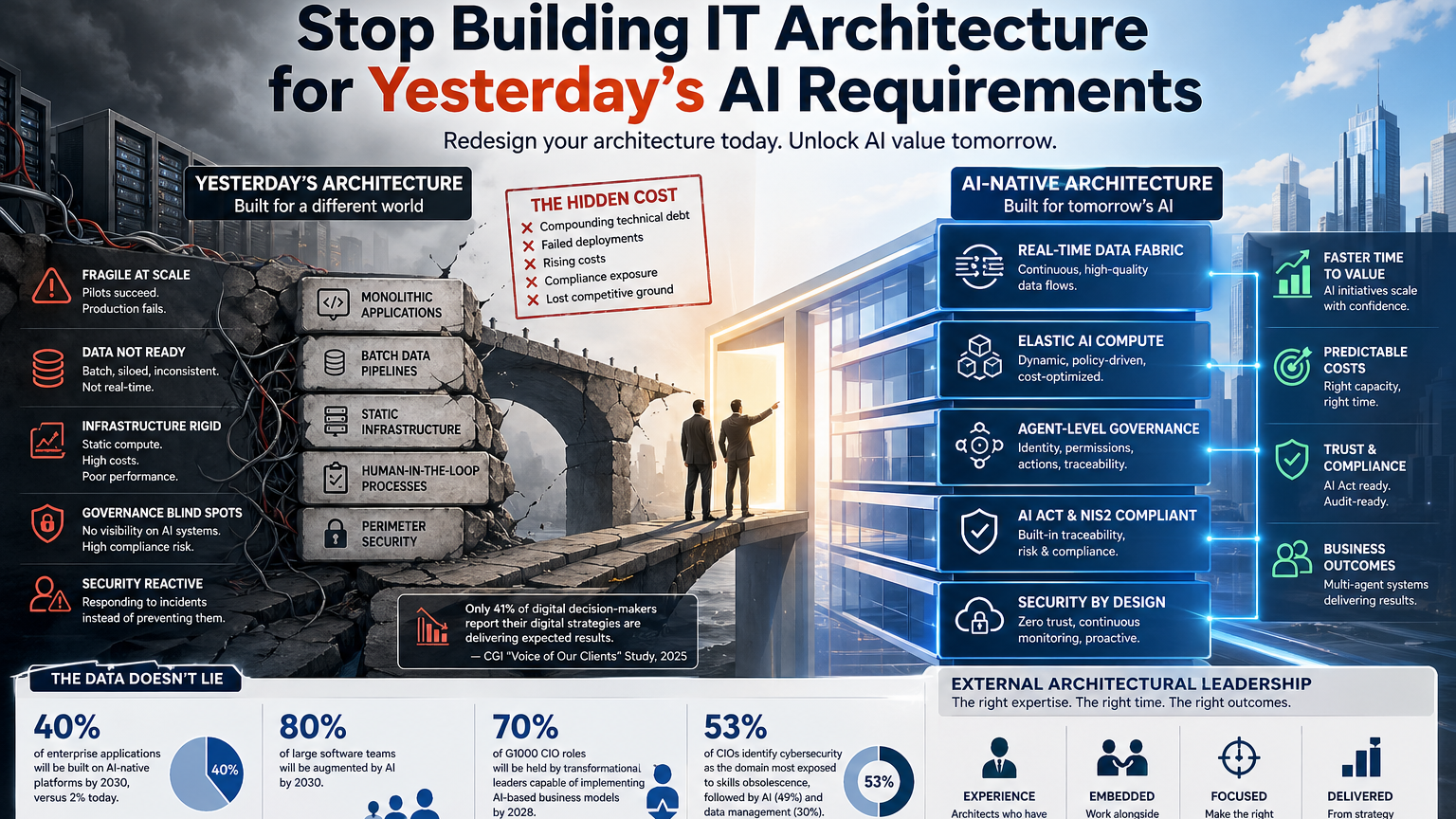

Most enterprise IT architectures were designed before AI-native platforms existed. By 2030, 40% of enterprise applications will run on AI-native platforms (Gartner, 2026), yet only 2% do today. Organizations that delay structural redesign now are not falling behind gradually — they are locking in technical debt that compounds with every deployment cycle.

Introduction

There is a version of this conversation happening in boardrooms across France right now. A CIO walks in with a roadmap built around cloud consolidation, API modernization, and incremental AI integration. The executive committee pushes back: where are the AI results? Why is the competition moving faster? The honest answer — one that is rarely said out loud — is that the architecture underneath the AI ambition was never designed to support it.

This is not a failure of vision. It is a structural mismatch. The information systems that power most established French organizations were built for a different era: stable workloads, predictable data flows, human-in-the-loop decision cycles. AI-native development breaks every one of those assumptions. It demands real-time data pipelines, dynamic compute allocation, multi-agent orchestration, and governance frameworks that did not exist three years ago.

The gap between where most IT architectures are today and where AI-native operations require them to be is not a gap you close with a sprint. It requires deliberate, expert-led structural redesign. This article explains what that redesign looks like, why it exceeds the capacity of most internal teams, and what the cost of delay actually is.

- The architecture gap nobody is talking about openly

- What does “AI-native” actually require from your infrastructure?

- Why internal teams consistently hit the ceiling on this

- How do you know your current architecture is actively blocking AI value?

- What external architectural leadership actually looks like in practice

- Conclusion

1. The architecture gap nobody is talking about openly

Gartner’s 2026 projections are stark: by 2030, 80% of large software teams will be augmented by AI, and 40% of enterprise applications will be built on AI-native platforms — compared to just 2% today. That is not an incremental shift. That is a near-complete replacement of the development and operational model most organizations currently rely on.

The problem is that most IT leaders are trying to bridge this gap by layering AI capabilities onto existing architecture. A copilot here, a machine learning module there, an API connection to a foundation model. It looks like progress on a slide deck. In practice, it creates fragility. The underlying data pipelines were not designed for the volume, velocity, or variability that AI workloads generate. The security perimeter was not built to handle autonomous agents making decisions and triggering actions. The governance model assumes human review at every step — a model that collapses under agentic AI.

The MAGNum 2026 framework, published in March 2026 by L’Usine Digitale, identifies this structural misalignment explicitly. Its thirteen governance vectors, spanning architecture, data and AI, risk and compliance, and portfolio management, reflect a recognition that the information system (IS) is no longer a support function. It is the strategic core of the organization. Designing it as if it were still a back-office utility is not just a technical error. It is a strategic one.

What makes this particularly difficult is that the gap is invisible until something breaks. Teams ship AI features, demos work, pilots succeed. Then the system hits production scale and the cracks appear: latency spikes, data inconsistencies, compliance exposures, cost overruns on compute. By that point, the architecture decisions that caused the problem were made months earlier — and unwinding them is expensive.

2. What does “AI-native” actually require from your infrastructure?

AI-native is not a marketing label. It describes a specific set of architectural requirements that differ fundamentally from traditional enterprise IT design. Understanding those requirements is the first step toward an honest assessment of where your current system stands.

At the data layer, AI-native systems require continuous, high-quality data flows — not batch exports or periodic synchronization. Models need access to fresh, contextualized data in near real-time. This means rethinking ETL pipelines, data contracts between systems, and the governance of data quality at the source. According to IDC’s CIO Summit France findings, architecture, data, and governance are the three blind spots most responsible for failed AI deployments in enterprise environments. That is not a coincidence — they are the exact layers that traditional IT architecture treats as secondary concerns.
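To make the idea of a data contract with a freshness requirement concrete, here is a minimal sketch in Python. The `DataContract` class, the field names, and the five-minute threshold are illustrative assumptions, not part of any cited framework; a real contract would typically also cover schema types, ownership, and quality thresholds.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class DataContract:
    """Hypothetical contract a producing system agrees to honor."""
    required_fields: frozenset
    max_age: timedelta  # how stale a record may be before a model should not use it


def violations(record: dict, contract: DataContract, now: datetime) -> list:
    """Return human-readable contract violations for a single record."""
    problems = []
    missing = contract.required_fields - set(record)
    if missing:
        problems.append(f"missing fields: {sorted(missing)}")
    updated_at = record.get("updated_at")
    if updated_at is not None and now - updated_at > contract.max_age:
        problems.append("record is stale")
    return problems


# Illustrative usage with made-up values.
contract = DataContract(
    required_fields=frozenset({"customer_id", "updated_at"}),
    max_age=timedelta(minutes=5),
)
now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
fresh = {"customer_id": "C-1", "updated_at": now - timedelta(minutes=1)}
stale = {"updated_at": now - timedelta(hours=2)}
```

Checking the contract at the source, before a record reaches a model, is what separates continuous data quality governance from after-the-fact cleanup of batch exports.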

At the compute layer, AI workloads are fundamentally non-linear. A single agentic workflow can spike GPU demand by an order of magnitude within seconds, then drop to near-zero. Static infrastructure provisioning — the default model for most on-premise and hybrid environments — cannot handle this gracefully. Organizations that have not moved to dynamic, policy-driven compute allocation are paying for capacity they do not use and failing to deliver capacity when they need it.
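As a rough illustration of policy-driven allocation, the sketch below derives a replica count from current demand and clamps it to policy bounds. The `ScalingPolicy` name and all numbers are hypothetical; production autoscalers add smoothing, cooldown periods, and cost ceilings on top of this basic calculation.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ScalingPolicy:
    """Hypothetical scaling policy for an inference service."""
    requests_per_replica: int  # load one replica can absorb
    min_replicas: int          # floor (0 allows scale-to-zero)
    max_replicas: int          # ceiling that caps spend


def desired_replicas(pending_requests: int, policy: ScalingPolicy) -> int:
    """Replicas needed for the current load, clamped to the policy bounds."""
    needed = math.ceil(pending_requests / policy.requests_per_replica)
    return max(policy.min_replicas, min(policy.max_replicas, needed))


# Illustrative usage: an idle period, a moderate load, and a spike.
policy = ScalingPolicy(requests_per_replica=50, min_replicas=0, max_replicas=20)
idle = desired_replicas(0, policy)
moderate = desired_replicas(120, policy)
spike = desired_replicas(5000, policy)
```

With these assumed values the idle case scales to zero, the moderate load needs three replicas, and the spike is capped at the policy maximum of twenty. Static provisioning would have to pay for the spike capacity permanently.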

At the security and governance layer, the challenge is more complex still. Agentic AI systems — where models take autonomous actions, call external APIs, write to databases, and trigger downstream processes — require a governance model that tracks identity, permissions, and actions at the agent level. The AI Act, fully applicable from 2 August 2026, mandates exactly this kind of traceability. As Frédéric Brajon of Saegus noted, “many organizations do not yet have centralized governance capable of maintaining an exhaustive view of all AI systems deployed — whether built internally, purchased, or embedded.” That is a compliance exposure, not just a technical gap.
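One way to picture agent-level governance is a gateway that checks each agent's permissions and records every attempted action, allowed or not. This is a simplified sketch: `AgentGateway`, the agent identifiers, and the permission strings are invented for illustration, and it is not a statement of what the AI Act requires in detail.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentGateway:
    """Hypothetical choke point through which every agent action must pass."""
    permissions: dict  # agent_id -> set of allowed action names
    audit_log: list = field(default_factory=list)

    def authorize(self, agent_id: str, action: str) -> bool:
        """Decide whether the agent may act, and log the decision either way."""
        allowed = action in self.permissions.get(agent_id, set())
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "allowed": allowed,
        })
        return allowed


# Illustrative usage with made-up agents and actions.
gateway = AgentGateway(permissions={"invoice-agent": {"read:invoices"}})
ok = gateway.authorize("invoice-agent", "read:invoices")
denied = gateway.authorize("invoice-agent", "write:ledger")
unknown = gateway.authorize("shadow-agent", "read:invoices")
```

The design choice worth noting is that denied and unknown-agent attempts are logged too. An audit trail that only records successful actions cannot answer the question of what an agent tried to do.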

Gartner frames the CIO’s evolving role around three archetypes: the Architect who builds AI-native foundations, the Synthesizer who turns multi-agent systems into measurable business outcomes, and the Sentinel who ensures trust, sovereignty, and preemptive security. Most IT organizations are currently operating in none of these modes with any consistency.

- 40% of enterprise applications will be built on AI-native platforms by 2030, versus 2% today — Gartner / IT for Business, 2026

- 80% of large software teams will be augmented by AI by 2030 — Gartner / IT for Business, 2026

- 70% of G1000 CIO roles will be held by transformational leaders capable of implementing AI-based business models by 2028 — IDC CIO Summit France

- Only 41% of digital decision-makers report that their digital strategies are producing the expected results — CGI “Voice of Our Clients” study, 2025

- 53% of CIOs identify cybersecurity as the domain most exposed to skills obsolescence, followed by AI (49%) and data management (30%) — IT Skills Obsolescence Barometer, Journal du Net, 2026

3. Why internal teams consistently hit the ceiling on this

This is the part that is hardest to say directly, but field experience makes it unavoidable: the teams responsible for maintaining your current architecture are often the least well positioned to redesign it. The reason is not a lack of talent. It is that they are fully committed to keeping the existing system running, and that the expertise required for AI-native architectural redesign is genuinely scarce.

The IT Skills Obsolescence Barometer (Journal du Net, January 2026) puts numbers to this: 53% of CIOs identify cybersecurity as the domain most exposed to skills obsolescence, followed by AI at 49% and data management at 30%. These are not peripheral domains. They are the exact competencies required to design and operate an AI-native architecture. In addition, 69% of CIOs consider access to skills a major challenge, according to McKinsey data cited by Journal du Net.

The structural problem runs deeper than hiring. Even organizations that recruit aggressively for AI and data engineering talent find that those profiles — when they exist — are absorbed into project delivery rather than architectural design. There is a difference between an engineer who implements a feature on an AI platform and an architect who designs the platform itself, defines the data contracts, establishes the agent governance model, and ensures the whole system is auditable under the AI Act. The latter profile is rare, expensive, and typically not available on the permanent headcount market.

This is the dynamic that leads to the situation Penon Partners encounters most frequently: an IT organization that has made genuine progress on AI adoption, but whose underlying architecture has not kept pace. The team has done what it could with the resources and expertise available. The gap is not a failure of effort — it is a failure of structural capacity. As we have explored in detail in our analysis of why AI projects fail and what external expertise can fix, the root cause is almost always architectural rather than technological.

4. How do you know your current architecture is actively blocking AI value?

The symptoms are recognizable, even if the diagnosis is not always immediate. Here are the patterns that consistently signal an architecture that has outgrown its design.

First: AI pilots succeed, production deployments fail. The pilot environment is controlled, the data is clean, the scope is narrow. Production introduces real-world complexity — data quality issues, integration failures, latency under load, security exceptions that block agent actions. If your AI projects consistently deliver in controlled conditions and struggle at scale, the architecture is the constraint.

Second: your data governance cannot answer basic questions about your AI systems. Under the AI Act’s requirements, you need to know — for every AI system in operation — what data it uses, what decisions it influences, what level of autonomy it has, and who is accountable. According to CGI’s 2025 “Voice of Our Clients” study, only 41% of decision-makers report that their digital strategies produce expected results. A significant part of that gap traces back to the inability to govern AI systems at the organizational level, not just the technical one.
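The four questions above map naturally onto an inventory record per AI system. The sketch below is one possible shape, with hypothetical field names and autonomy labels; it simply reports which questions an organization cannot yet answer for a given system.

```python
from dataclasses import dataclass, fields


@dataclass
class AISystemRecord:
    """Hypothetical inventory entry for one AI system in operation."""
    name: str
    data_sources: list
    decisions_influenced: list
    autonomy_level: str      # illustrative labels: "advisory", "human-approved", "autonomous"
    accountable_owner: str


def unanswered(record: AISystemRecord) -> list:
    """Names of the fields that are still empty, in declaration order."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


# Illustrative usage: a system nobody has fully documented yet.
chatbot = AISystemRecord(
    name="support-chatbot",
    data_sources=[],
    decisions_influenced=["ticket routing"],
    autonomy_level="advisory",
    accountable_owner="",
)
gaps = unanswered(chatbot)
```

Running such a check across every system, whether built internally, purchased, or embedded, gives a first measurable view of the governance gap.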

Third: your compute costs are unpredictable and growing faster than your AI value. This is a direct signal of infrastructure that was not designed for AI workload patterns. Static provisioning, manual scaling, and absence of cost-per-inference tracking are architectural choices — and they have financial consequences that compound quickly as AI usage grows.
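Cost-per-inference tracking can start very simply: divide compute spend by the number of inferences served in each period and watch the trend. The function names and the figures below are assumptions chosen for illustration, not benchmarks.

```python
from typing import Optional


def cost_per_inference(compute_cost_eur: float, inference_count: int) -> Optional[float]:
    """Unit cost of one inference, or None when nothing was served."""
    if inference_count <= 0:
        return None
    return compute_cost_eur / inference_count


def unit_cost_rising(periods: list) -> bool:
    """True if the unit cost strictly increased across every consecutive period.

    `periods` is a list of (compute_cost_eur, inference_count) tuples, oldest first.
    """
    unit_costs = [cost_per_inference(cost, count) for cost, count in periods]
    if len(unit_costs) < 2 or any(u is None for u in unit_costs):
        return False
    return all(later > earlier for earlier, later in zip(unit_costs, unit_costs[1:]))


# Illustrative usage: usage grows each month, but spend grows faster.
worsening = [(1200.0, 400_000), (2000.0, 500_000), (3600.0, 600_000)]
improving = [(1200.0, 400_000), (1500.0, 600_000)]
```

In the first series, volume rises by half while spend triples, so the unit cost climbs every month. That is the pattern described above: cost growing faster than the usage that is supposed to justify it.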

Fourth: your security team is operating reactively on AI risks rather than proactively. The 504,000 cybersecurity incident requests recorded by Cybermalveillance.gouv.fr in 2025, combined with a 38% increase in cyberattacks against French organizations (ANSSI), reflect an environment where AI systems — with their expanded attack surface and autonomous action capabilities — require proactive architectural security design, not incident response.

If two or more of these patterns are present, the architecture is not a background concern. It is an active blocker. This is consistent with what we have documented in our broader analysis of digital transformation in France in 2026: the organizations making real progress are those that addressed architectural foundations first, not last.
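The two-or-more rule can be written down as a small self-assessment. The class and field names are hypothetical shorthand for the four patterns described above; the answers still have to come from an honest review, not from the code.

```python
from dataclasses import astuple, dataclass


@dataclass(frozen=True)
class ArchitectureSignals:
    """Hypothetical yes/no answers to the four patterns described above."""
    pilots_succeed_production_fails: bool
    governance_cannot_answer_basics: bool
    compute_cost_outpaces_value: bool
    security_reactive_on_ai: bool


def is_active_blocker(signals: ArchitectureSignals) -> bool:
    """Apply the article's threshold: two or more signals present."""
    return sum(astuple(signals)) >= 2


# Illustrative usage.
one_signal = ArchitectureSignals(True, False, False, False)
two_signals = ArchitectureSignals(True, False, True, False)
```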

Key insights for IT and digital decision-makers:

- Layering AI capabilities onto legacy architecture creates compounding fragility. It is not a viable path to AI-native operations.

- The AI Act’s full application on 2 August 2026 makes agent-level governance and AI system traceability a compliance requirement, not a best practice.

- Skills obsolescence in AI, cybersecurity, and data management means internal teams cannot be expected to lead architectural redesign without external support.

- The organizations that are succeeding with AI at scale redesigned their data pipelines, compute allocation, and governance frameworks before scaling deployment, not after.

- External architectural leadership is most valuable when engaged before production failures occur, not as a rescue operation afterward.

5. What external architectural leadership actually looks like in practice

External architectural leadership is not a synonym for outsourcing. It is a specific engagement model: a senior architect with proven AI-native platform experience embeds within the organization, works alongside the internal team, and leads the structural redesign while transferring knowledge and capability throughout the process.

The value is not in the deliverables — the diagrams, the frameworks, the documentation. The value is in the decisions made correctly the first time. An architect who has designed AI-native data pipelines in comparable enterprise contexts knows where the failure modes are before they appear. They know which governance choices will create AI Act compliance problems at scale. They know how to design compute allocation policies that remain cost-predictable as AI usage grows. That pattern recognition is not something you build internally in six months — it comes from having done it before, in real production environments, under real pressure.

98% of French companies are expected to increase their AI budgets in 2026 (Journal du Net, January 2026). That investment will be wasted if the architectural foundations are not in place to support it. The CGI study finding — that only 41% of digital decision-makers achieve expected results from their digital strategies — reflects exactly this dynamic. Technology investment without architectural readiness does not produce results. It produces complexity.

At Penon Partners, the engagements that deliver the clearest value are those where the external architect is brought in at the design stage, not the rescue stage. The mandate is specific: assess the current architecture against AI-native requirements, identify the structural gaps, design the transition path, and lead implementation with the internal team. It is not a consulting assignment that ends with a report. It is an operational engagement that ends with a system that works — and a team that understands why. This is the kind of structural transformation we describe in our analysis of why transformation is no longer a project but a continuous discipline.

The organizations that are best positioned for this engagement are those where leadership has already recognized that the internal capacity ceiling has been reached — where the pressure is real, the budget is allocated, and the need is for a partner with the credibility and situational fit to reduce pressure rather than add to it.

Conclusion

The shift to AI-native architecture is not a future concern. It is a present-tense structural challenge with a hard regulatory deadline — the AI Act’s full application in August 2026 — and a competitive cost that compounds with every quarter of delay. The organizations that will extract real value from AI in the next three years are not the ones with the largest AI budgets. They are the ones that redesigned their architectural foundations before scaling deployment.

That redesign requires expertise that most internal teams do not currently have — not because they are not capable, but because the skills are genuinely scarce and the internal team is already fully committed to keeping existing systems operational. The case for external architectural leadership is not about distrust of internal talent. It is about structural honesty: some problems require a profile that does not exist on your permanent headcount, and the cost of pretending otherwise is measured in failed deployments, compliance exposures, and lost competitive ground.

If your AI initiatives are producing pilots but not production results, if your governance cannot answer basic questions about your AI systems, or if your compute costs are growing faster than your AI value — the architecture is the constraint. The right move is to address it directly, with the right expertise, before the gap widens further.

FAQ

Why do AI projects fail even when the technology is solid?

AI projects most often fail due to architectural misalignment, not technology choice. Only 41% of digital decision-makers report achieving expected results from their digital strategies (CGI, 2025). The root causes are typically inadequate data pipelines, absent agent governance, and infrastructure not designed for AI workload patterns. Fix the architecture first.

What is an AI-native IT architecture?

An AI-native architecture is designed from the ground up to support dynamic AI workloads — including real-time data pipelines, elastic compute allocation, and agent-level governance. Gartner projects that 40% of enterprise applications will run on AI-native platforms by 2030, versus 2% today. Most current enterprise architectures require structural redesign to reach this standard.

How does the EU AI Act affect IT architecture decisions?

The AI Act, fully applicable from 2 August 2026, requires organizations to document every AI system’s purpose, autonomy level, data sources, and accountability chain. This mandates agent-level governance and traceability built into the architecture — not added after deployment. Organizations without centralized AI system governance face direct compliance exposure.

When should a CIO bring in an external IT architect?

Bring in an external architect when internal teams have hit their capacity ceiling on structural redesign — typically signaled by AI pilots succeeding but production deployments failing. 69% of CIOs consider skills access a major challenge (McKinsey / Journal du Net, 2026). External architects with AI-native platform experience deliver pattern recognition that cannot be built internally in time.

What is the difference between AI integration and AI-native architecture?

AI integration adds AI capabilities onto existing infrastructure — copilots, API connections, ML modules. AI-native architecture redesigns the infrastructure itself to support AI as a primary workload. The distinction matters because integration creates compounding fragility at scale, while AI-native design ensures governance, cost predictability, and compliance are built in from the start.

How do you assess whether your IT architecture is blocking AI value?

Four signals indicate architectural constraint: AI pilots succeed but production deployments fail; data governance cannot answer basic AI Act compliance questions; compute costs grow faster than AI value; and security teams operate reactively on AI risks. If two or more apply, the architecture is an active blocker — not a background concern.

What does NIS2 add to the IT architecture challenge in France?

NIS2, with the ANSSI’s ReCyF framework published in March 2026, adds mandatory cybersecurity measures to an already complex architectural agenda. 74% of French SMEs fall below ANSSI’s recommended maturity level. For CIOs managing AI integration alongside NIS2 compliance, the architectural surface area — data, agents, APIs, compute — must be designed with security as a native property, not a retrofit.

Your architecture should be built for the AI you are deploying now — not the one you deployed two years ago.
